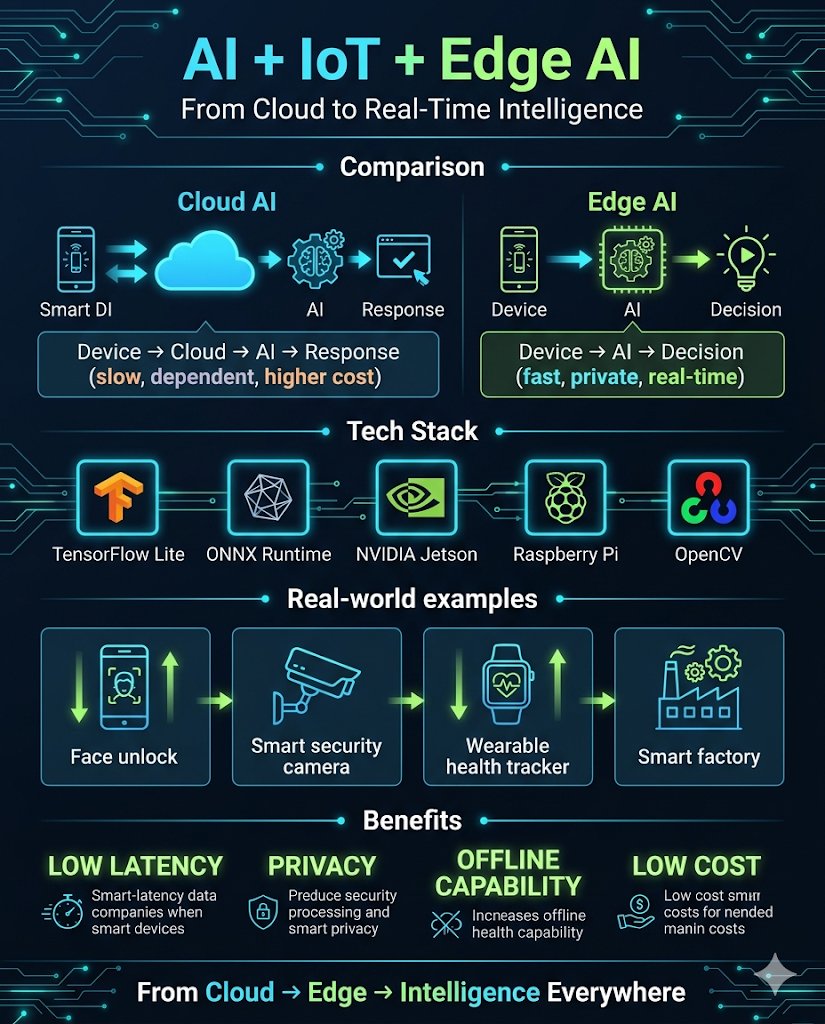

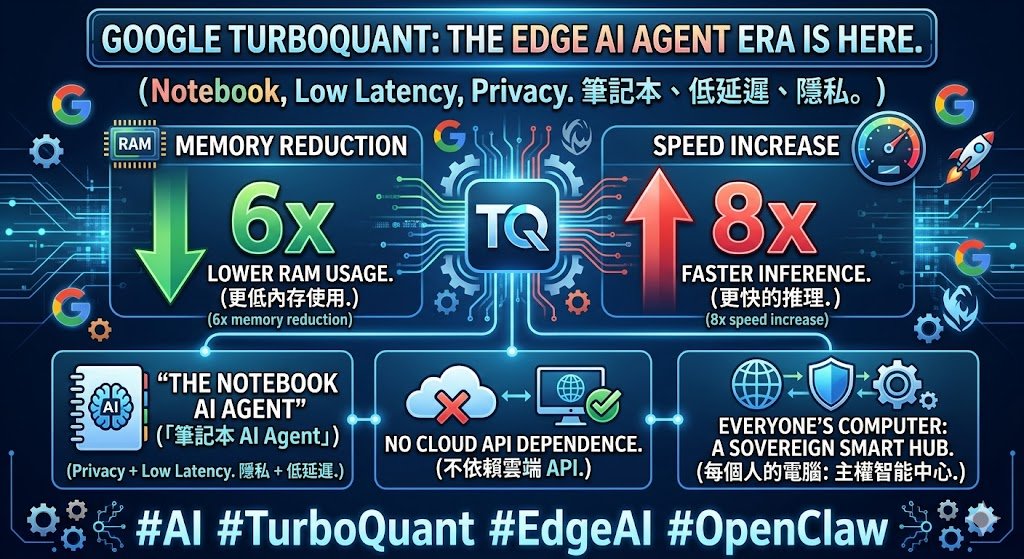

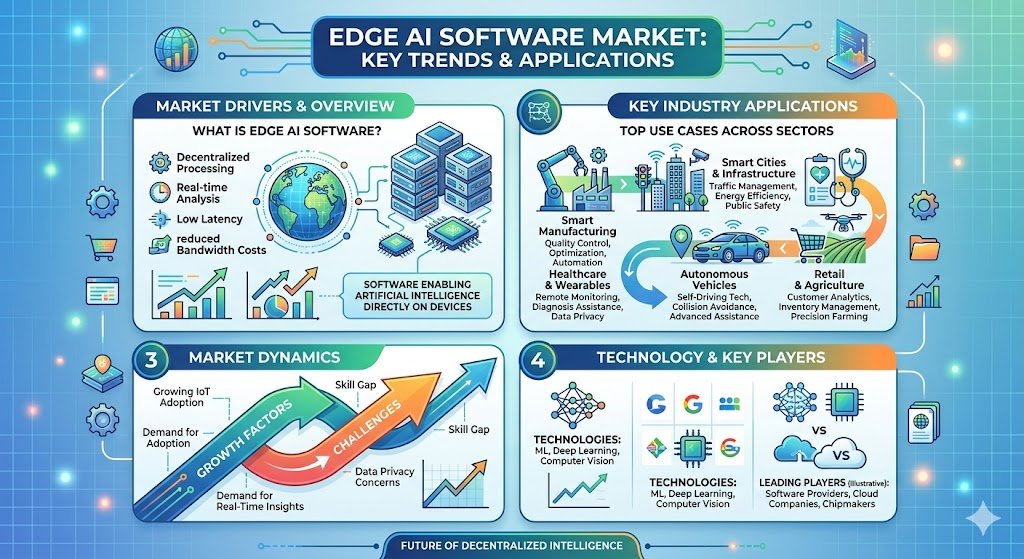

Learn the difference between NPUs and TPUs, and discover which AI accelerator is best for edge or cloud applications.

From gateworks.com

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

From huggingface.co

On April 16, 2026, at 1:00 pm EDT (10:00 am PDT), Boston.AI will deliver a webinar, “Remembering to Forget: Agentic Memory Systems and Context Constraints.” From the event page: As AI agents evolve from...

From edge-ai-vision.com

The smartest gadget of 2026 isn’t the one with the most features; it’s the one that understands you locally, keeps your data on your device when it can, and only calls home when it must. That’s the...

From vertexknowledge.com

Microchip presents a customizable, target-agnostic solution to program wake words and voice commands. The ML model, generated and tested using a custom application, has low latency and a minimal...

From edge-ai-vision.com