Quick reality check on @Google TurboQuant (the KV cache compression method everyone’s buzzing about).

Regular weight quantization (GPTQ/AWQ/QLoRA) already cuts weight memory by ~75% at 4-bit (vs. 16-bit) with almost no quality hit.

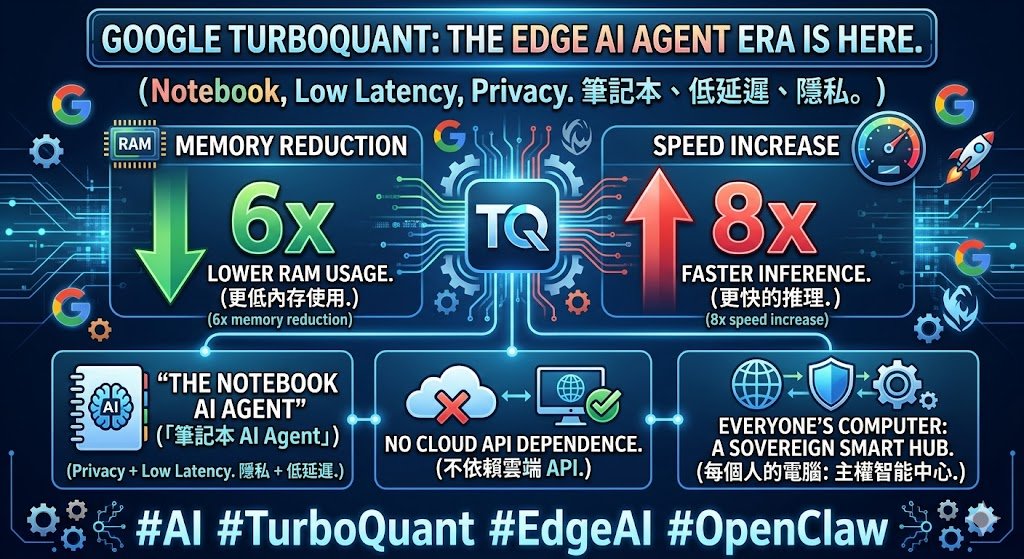

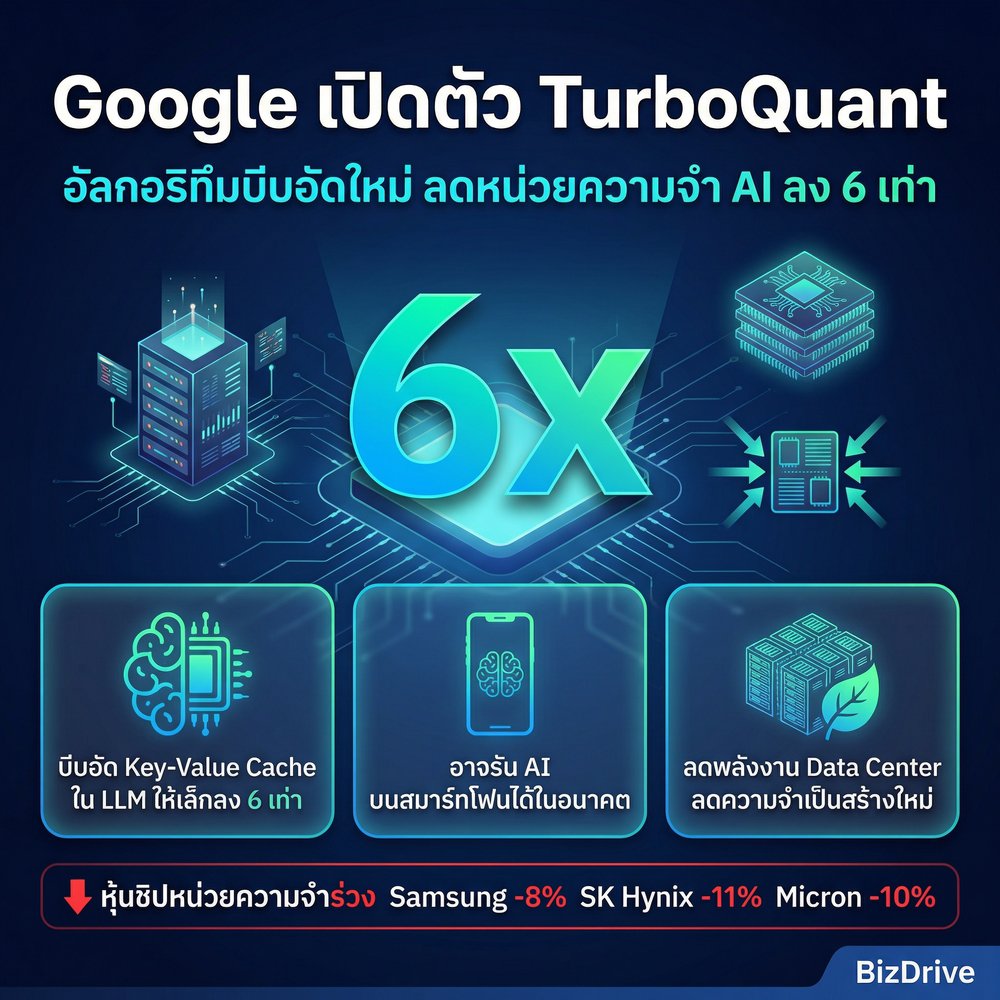
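Where the 75% comes from is just bit arithmetic. A minimal sketch, assuming a hypothetical 7B-parameter model with an FP16 baseline. It ignores the small per-group overhead (scales, zero points) that real 4-bit formats add, and it covers weights only, not activations or the KV cache.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Raw weight storage in GB (1 GB = 1e9 bytes), ignoring scales/zero-points."""
    return num_params * bits_per_weight / 8 / 1e9

params = 7e9  # hypothetical 7B-parameter model
fp16 = weight_memory_gb(params, 16)  # 16-bit baseline
int4 = weight_memory_gb(params, 4)   # 4-bit quantized weights
savings = 1 - int4 / fp16

print(f"FP16: {fp16:.1f} GB, 4-bit: {int4:.1f} GB, saved: {savings:.0%}")
# prints "FP16: 14.0 GB, 4-bit: 3.5 GB, saved: 75%"
```

16 → 4 bits is a 4× reduction, i.e. 75% saved, regardless of model size.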

#TurboQuant goes after the other memory hog: the KV cache, which grows with context length during long-context inference. It claims a 6× smaller cache, up to 8× faster attention on H100s, zero accuracy drop, and no training required.
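Why the KV cache matters at long context: it scales linearly with sequence length and batch size, which weight quantization doesn't touch. A rough sketch, assuming a hypothetical 8B-class config with grouped-query attention (32 layers, 8 KV heads, head_dim 128), FP16 storage, and a 128k-token context. The `/ 6` simply applies the headline claim and ignores any metadata overhead; it is not TurboQuant's actual method.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch=1, bytes_per_value=2.0):
    # Factor of 2: one key tensor and one value tensor per layer.
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value

GIB = 1024 ** 3
# Hypothetical config; bytes_per_value=2.0 assumes FP16/BF16.
baseline = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=128 * 1024)
compressed = baseline / 6  # the claimed 6x reduction, applied naively

print(f"FP16 KV cache: {baseline / GIB:.2f} GiB -> ~{compressed / GIB:.2f} GiB at 6x")
# prints "FP16 KV cache: 16.00 GiB -> ~2.67 GiB at 6x"
```

At this length the FP16 cache for a single sequence is already several times larger than the 4-bit weights of a model this size, which is why the two techniques stack.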

Sounds like the perfect complement.

But there’s drama.

The authors of RaBitQ (a prior method built on similar random rotation + quantization ideas, including the Johnson-Lindenstrauss (JL) transform) just went public. They say TurboQuant misrepresents their work, uses unfair benchmarks (RaBitQ on a single-core CPU vs. TurboQuant on a GPU), calls their theory “suboptimal” without proof, and downplays the methodological overlap. They also say these issues were flagged pre-submission.

The paper still got accepted at ICLR 2026 and was heavily hyped.
