Post 7/8

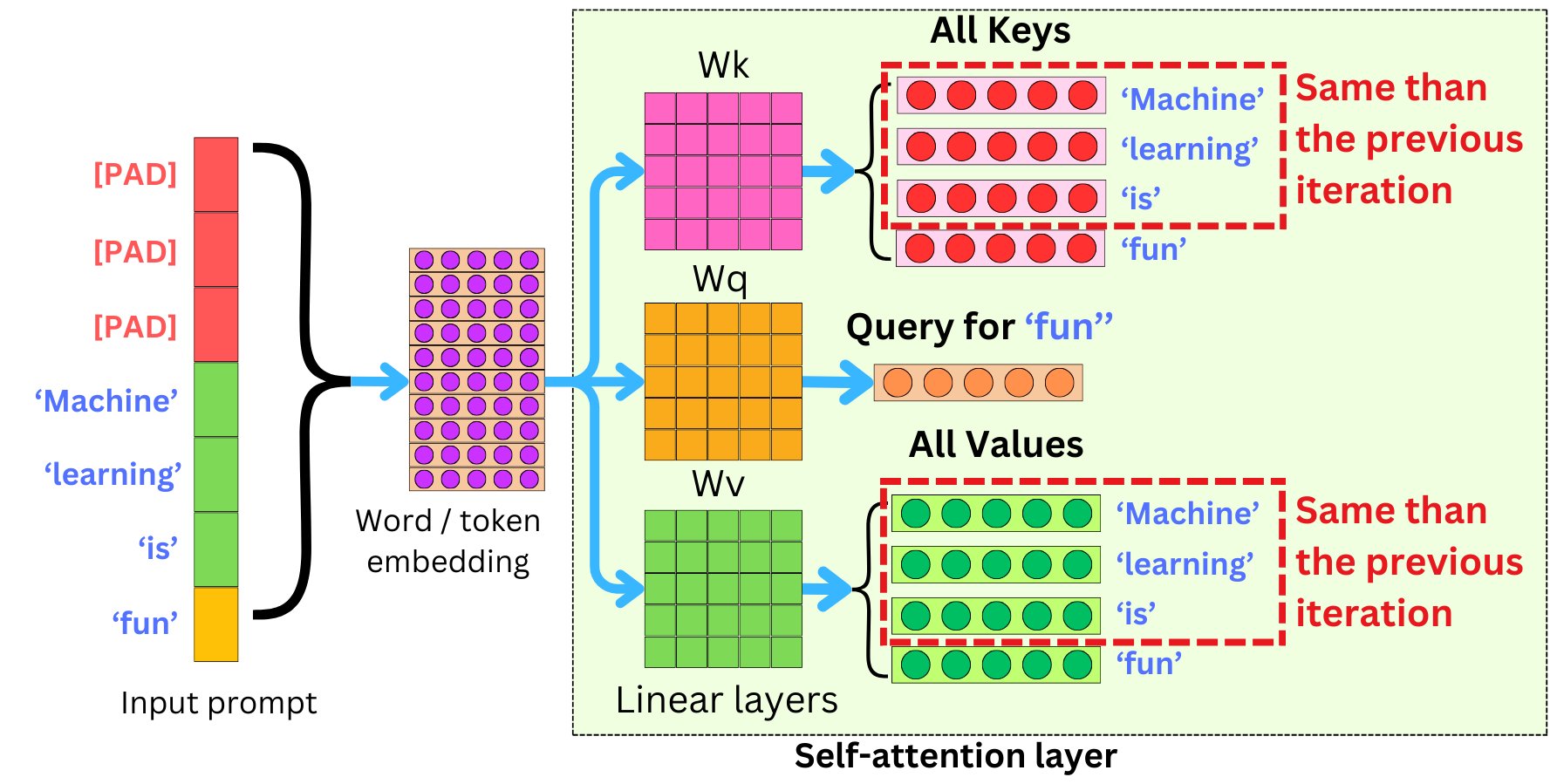

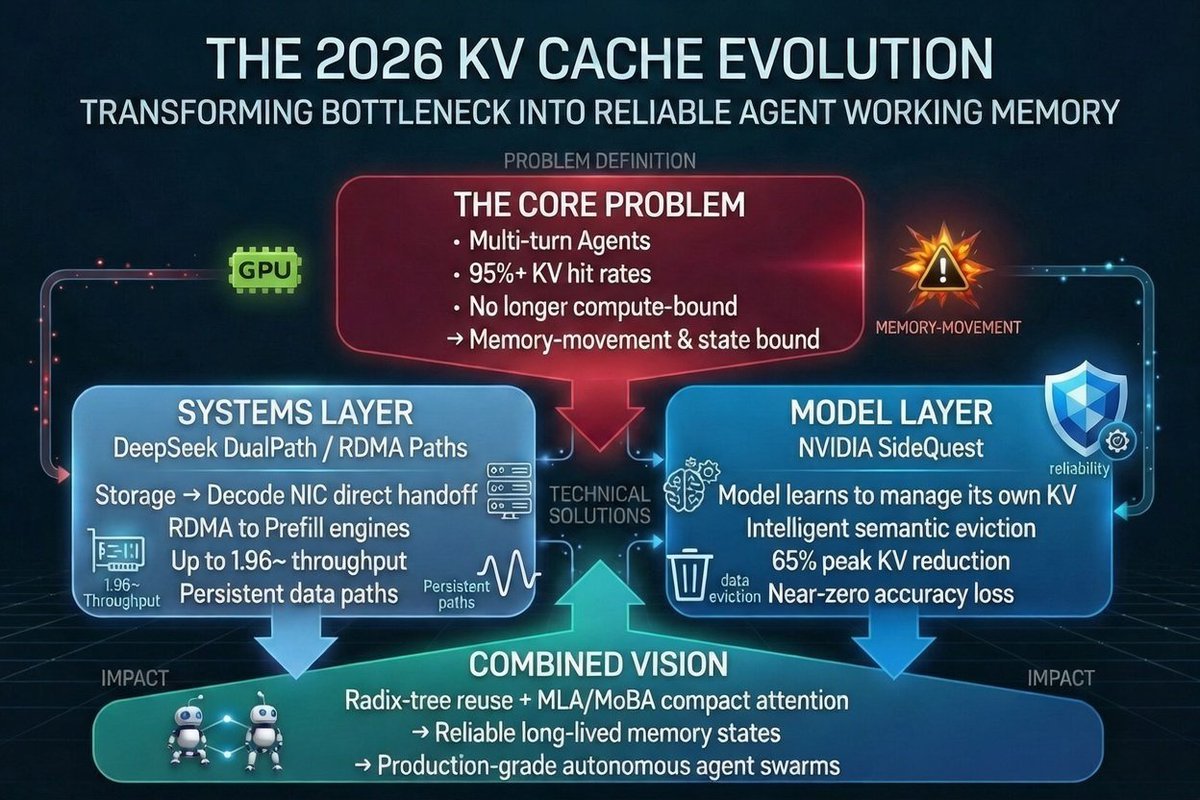

#TurboQuant beats older methods (like KIVI) with:

• Higher vector search recall

• Lower distortion

• Zero fine-tuning needed

• Distortion near theoretical limits

Boosts LLMs (KV-cache compression) + large-scale vector databases & semantic search.

Google is cooking! 🔥

#GoogleAI #KVCache #VectorSearch
