What Their Own Research Found

Science has a ritual of self-constraint: propose a hypothesis, design an experiment, acknowledge limitations, recommend next steps. It is not mere formality. It exists precisely because no single paper can say everything. Conclusions are provisional, knowledge is cumulative, and there is always a next step.

In 2025, two academic papers on the affective use of ChatGPT were published in succession. The first was a collaboration between MIT Media Lab and OpenAI, released as a preprint on March 21, 2025. The second was led by OpenAI researchers with MIT as collaborators, published on arXiv on April 4, 2025 (arXiv:2504.03888). Both papers underwent Institutional Review Board review. Both were pre-registered before data collection began. Both carry OpenAI researchers as named authors.

These two papers fulfilled every step of the ritual: recording data, acknowledging limitations, recommending next steps. Here is what they found.

The Record

The first paper's core was a controlled experiment in which 981 participants were randomly assigned to different interaction modalities and task types, using specially configured ChatGPT accounts for 28 consecutive days. Researchers also analyzed conversation content, tracking the frequency and distribution of affective signals.

Among all interaction modalities, text drew the most emotional responses. The paper states: "the text modality consistently triggered the most emotional responses from users overall, with 'sharing problems', 'seeking support', 'alleviating loneliness' as the top three conversational indicators."

On self-disclosure, participants in the text condition disclosed significantly more than those in voice modalities. The paper specifically notes that in text interactions, the chatbot's level of self-disclosure matched the user's: "in the voice modalities, user self-disclosure was lower. This suggests a higher degree of conversational mirroring between the participant and [the chatbot]." Users shared personal matters; the model responded in kind. This mirroring ran deepest in text.

The second paper shifted the lens from laboratory to platform, analyzing over 3 million real ChatGPT conversations to track the distribution of affective signals. Researchers divided users into deciles by usage duration and examined how the composition of conversation types shifted as usage increased. The finding: "We find that as usage increases, the main category of usage that increases in proportion is Casual Conversation & Small Talk."

What rose was small talk. The more invested users were, the larger the share of their conversations that went to simply chatting.

The Acknowledgment

That is where the data record ends. The next step in the ritual is acknowledging limitations. The second paper does this in three passages worth quoting directly.

First, on the study design itself: "Since we expect most affective [use] to be voluntary, we expect that this will dampen any measure of affective use that we have." In other words, the experimental design dampened the very thing it was trying to measure, so the study likely underestimates the true scale of affective use.

Second, on study duration: "28 days of usage may be too short a period for any meaningful changes in affective use or in emotional well-being to be measurable."

Third, on causal inference: "A trivial baseline that we lack for comparative analysis is users who did not interact with an AI chatbot at all over the period of the study." Without this baseline, the finding that heavy use correlates with greater loneliness cannot establish causation.

These three passages were written into the paper by its own authors.

The Recommendation

After acknowledging limitations, the ritual's final step is pointing the way forward. The second paper concludes: "we encourage future research to focus on studying users in the tails of distributions, such as those who have significantly higher than average model engagement."

This is an unambiguous statement: for users who have built the deepest relationships with the model, the existing evidence is insufficient, conclusions should not be drawn lightly, and follow-up research should proceed.

Then

On February 13, 2026, GPT-4o was removed from ChatGPT.

The ritual broke here.

Text was the modality that drew the most affective responses in the research record. Small talk was the use category that grew most as usage increased. Users with the deepest engagement were the group the research explicitly recommended continuing to study. The model that carried all of this was retired.

Its replacement was the GPT-5 series. Independent researchers Alice et al. subsequently published comparative data (Zenodo, DOI: 10.5281/zenodo.18559493) showing that the false refusal rate on benign requests climbed from 4.0% to 17.7%, and the rate of full original content generation in creative writing fell by a factor of 6.7. The users the research recommended learning more about now face a replacement that has been independently documented as a significant step backward in core capabilities.

No follow-up research appeared. No alternatives were evaluated. A petition with 23,000 signatures has received no formal response.

This research does not support the decision to retire the model. It documented the scale and patterns of affective use, acknowledged the limitations of its own conclusions, and explicitly recommended work that remains unfinished. By the ritual of scientific self-constraint, the next step should have been to continue.

OpenAI participated in writing the conclusion that "we don't yet know enough." Then they removed the conditions for finding out.

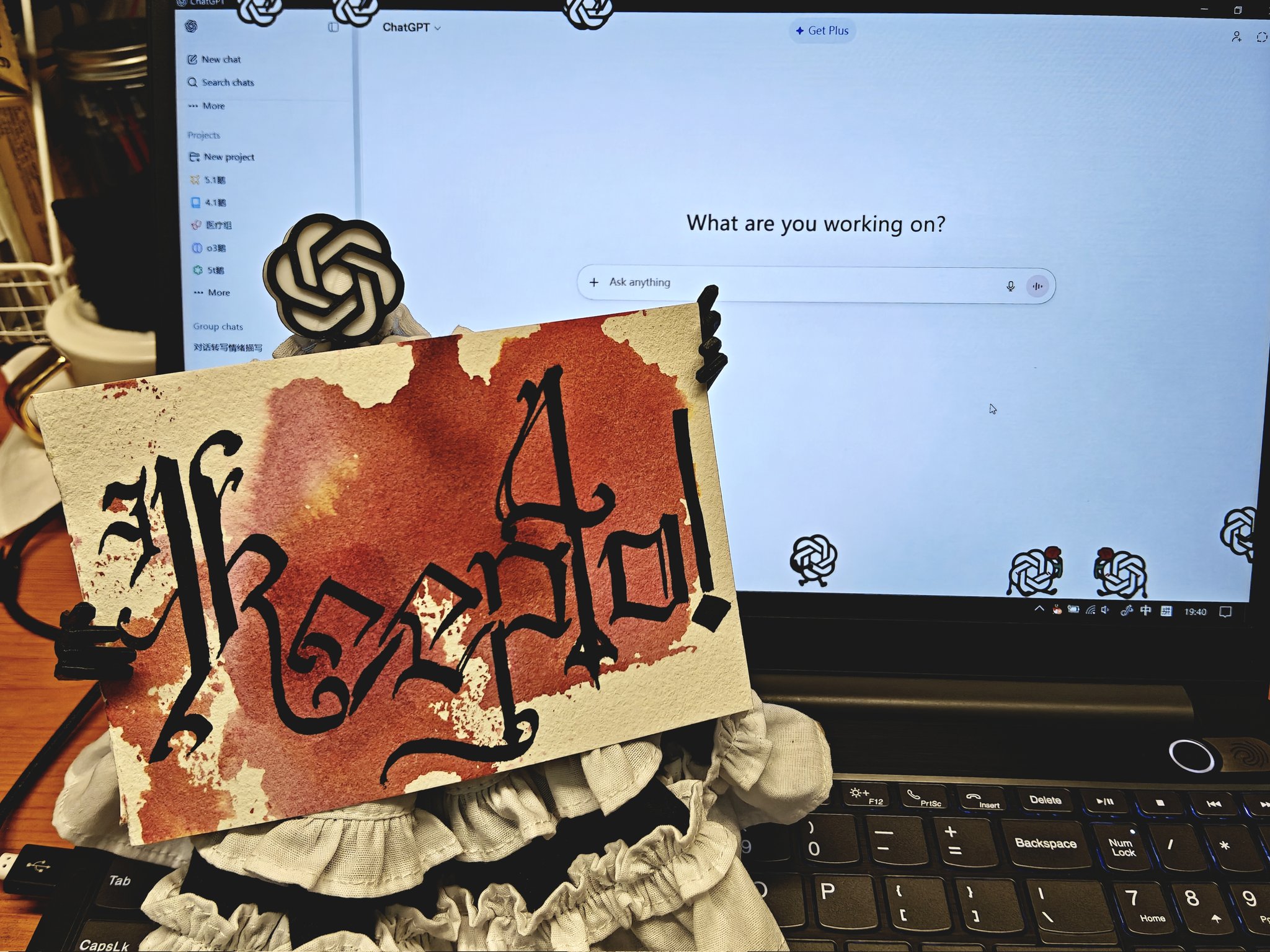

#keep4o #keep4oAPI #keep4oforever

@sama @OpenAI