LLMs vs World Models — what’s the real difference?

Most people treat them as the same thing. They are not.

They solve fundamentally different problems.

1. LLMs (Large Language Models)

Core function: pattern completion in token space

(1) Learn the statistical distribution of the next token given its context

(2) Operate on language, not reality
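To make "pattern completion in token space" concrete, here is a minimal sketch using a bigram counter. It is a deliberately tiny stand-in, not how a real LLM is built (real models use neural networks over long contexts); the corpus and the function names are assumptions made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(tokens):
    """Count how often each token follows each one-token context."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(tokens, tokens[1:]):
        counts[prev][nxt] += 1
    return counts

def next_token_distribution(counts, context):
    """Return P(next token | context) as a dict; empty if context is unseen."""
    following = counts.get(context, Counter())
    total = sum(following.values())
    if total == 0:
        return {}
    return {tok: n / total for tok, n in following.items()}

# Toy corpus (illustrative only).
corpus = "the cat sat on the mat the cat ran".split()
model = train_bigram(corpus)
dist = next_token_distribution(model, "the")
print(dist)  # "cat" gets probability 2/3, "mat" gets 1/3
```

The model knows nothing about cats or mats. It only knows which tokens tend to follow which.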

They are excellent at:

(1) Natural language understanding & generation

(2) Code synthesis

(3) Structured output (JSON, workflows, APIs)

(4) Interface between humans and systems

LLMs are best understood as:

a universal interface layer for cognition

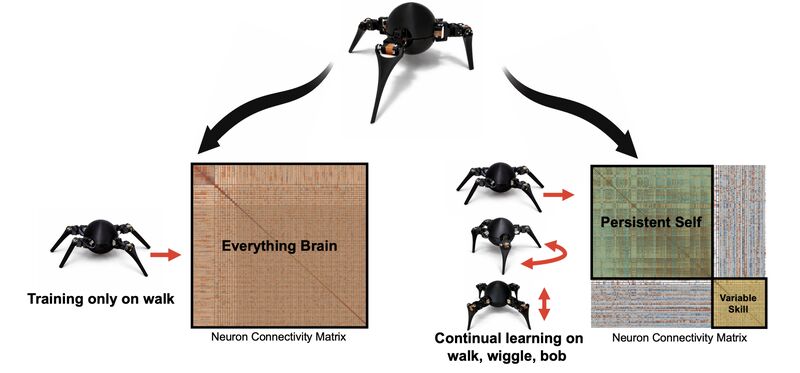

2. World Models

Core function: modeling state transitions of a system

(1) Learn how environments evolve over time

(2) Capture dynamics, causality, and feedback loops

(3) Operate on states, not tokens
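As a rough sketch of "modeling state transitions", the snippet below fits a one-parameter linear dynamics model s[t+1] = a * s[t] from an observed trajectory, then rolls it forward. The linear form, the data, and the function names are assumptions chosen for brevity; real world models are usually far richer and also condition on actions.

```python
def fit_linear_dynamics(trajectory):
    """Least-squares estimate of a in s[t+1] = a * s[t]."""
    num = sum(s * s_next for s, s_next in zip(trajectory, trajectory[1:]))
    den = sum(s * s for s in trajectory[:-1])
    return num / den

def rollout(a, state, steps):
    """Predict future states by applying the learned transition repeatedly."""
    states = [state]
    for _ in range(steps):
        state = a * state
        states.append(state)
    return states

# Observed states of a hypothetical decaying system.
observed = [8.0, 4.0, 2.0, 1.0]
a = fit_linear_dynamics(observed)
predicted = rollout(a, observed[-1], steps=2)
print(a, predicted)  # 0.5 [1.0, 0.5, 0.25]
```

The input and output here are states, not tokens: the model answers what the system does next, not what to say next.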

They are used for:

(1) Simulation and planning

(2) Control systems and robotics

(3) Financial market dynamics

(4) Risk and environment modeling

World Models are:

predictive representations of how the world changes

3. Key Difference

LLMs answer:

→ “Given this context, what should be said next?”

World Models answer:

→ “Given this state, what will happen next?”

4. Where each fits

Use LLMs when:

(1) The problem is semantic

(2) You need interpretation, structuring, or communication

(3) Output is consumed by humans or systems

Use World Models when:

(1) The problem is dynamic

(2) You need prediction under uncertainty

(3) Decisions depend on system evolution

5. In advanced systems, they are not competitors — they are complements.

A powerful stack looks like:

(1) LLM → understands intent & constructs strategy

(2) World Model → simulates outcomes & evaluates risk

(3) Execution system → acts on validated decisions

Example (Trading Systems)

(1) LLM: translates intent → strategy structure

(2) World Model: evaluates regime, risk, expected behavior

(3) Engine: executes under constraints
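The three layers can be sketched as a small pipeline. Everything here is hypothetical: `llm_parse_intent` is a keyword rule standing in for a real LLM call, the "world model" just scales a fixed list of made-up return scenarios, and the symbol, sizes, and risk limit are illustrative assumptions, not a trading method.

```python
from dataclasses import dataclass

@dataclass
class Strategy:
    symbol: str
    position_size: float  # fraction of capital, between 0 and 1

def llm_parse_intent(text):
    """Hypothetical stand-in for an LLM: free text -> structured strategy."""
    size = 0.5 if "aggressive" in text.lower() else 0.1
    return Strategy(symbol="XYZ", position_size=size)

def world_model_worst_loss(strategy, scenario_returns):
    """Stand-in world model: worst simulated loss as a fraction of capital."""
    outcomes = [strategy.position_size * r for r in scenario_returns]
    return max(0.0, -min(outcomes))

def execute(strategy, worst_loss, max_loss):
    """Execution layer: act only when simulated risk is within the limit."""
    return "rejected" if worst_loss > max_loss else "executed"

def run_pipeline(text, scenario_returns, max_loss):
    strategy = llm_parse_intent(text)
    worst_loss = world_model_worst_loss(strategy, scenario_returns)
    return execute(strategy, worst_loss, max_loss)

# Made-up return scenarios for the next period.
scenarios = [0.04, -0.02, -0.10]
print(run_pipeline("Be aggressive on XYZ", scenarios, max_loss=0.03))    # rejected
print(run_pipeline("Take a small position", scenarios, max_loss=0.03))  # executed
```

The design point is the separation: the language layer never decides whether a trade is safe, and the simulation layer never interprets free text.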

6. Takeaway

LLMs model language.

World Models model reality.

Confusing the two leads to fragile systems.

Combining them correctly leads to intelligent ones.

#LLM #worldmodel #AI #Xgent