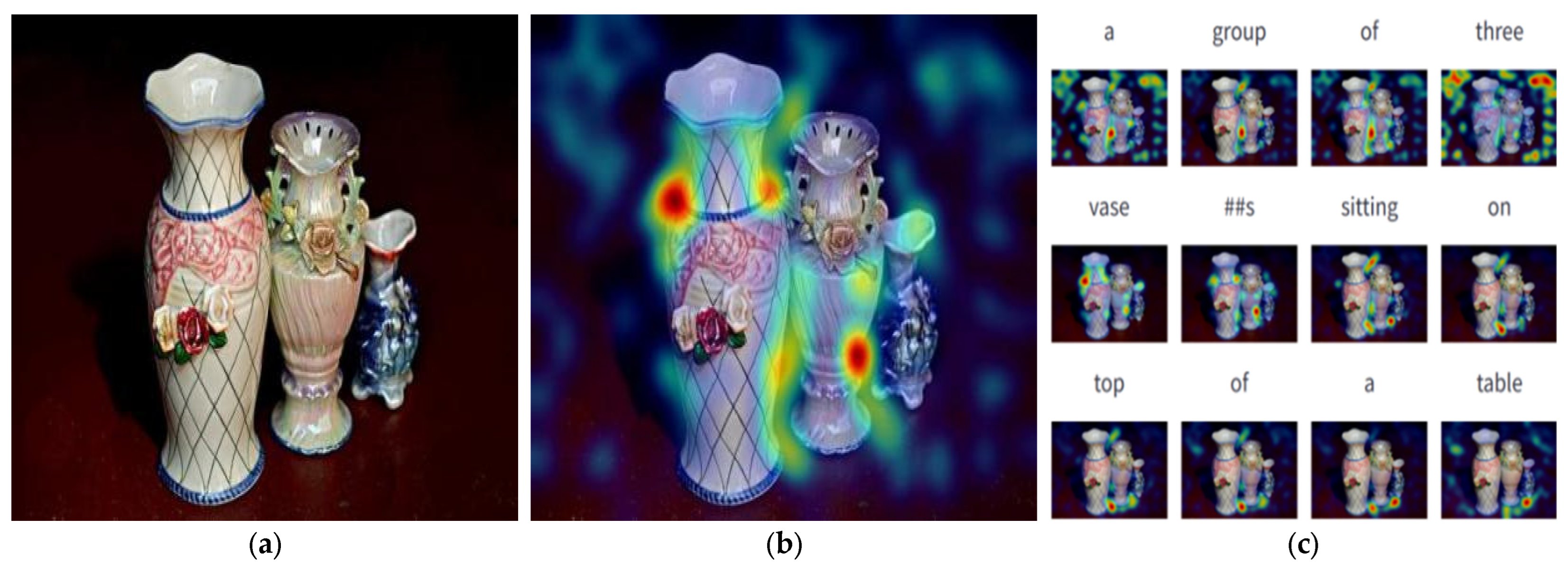

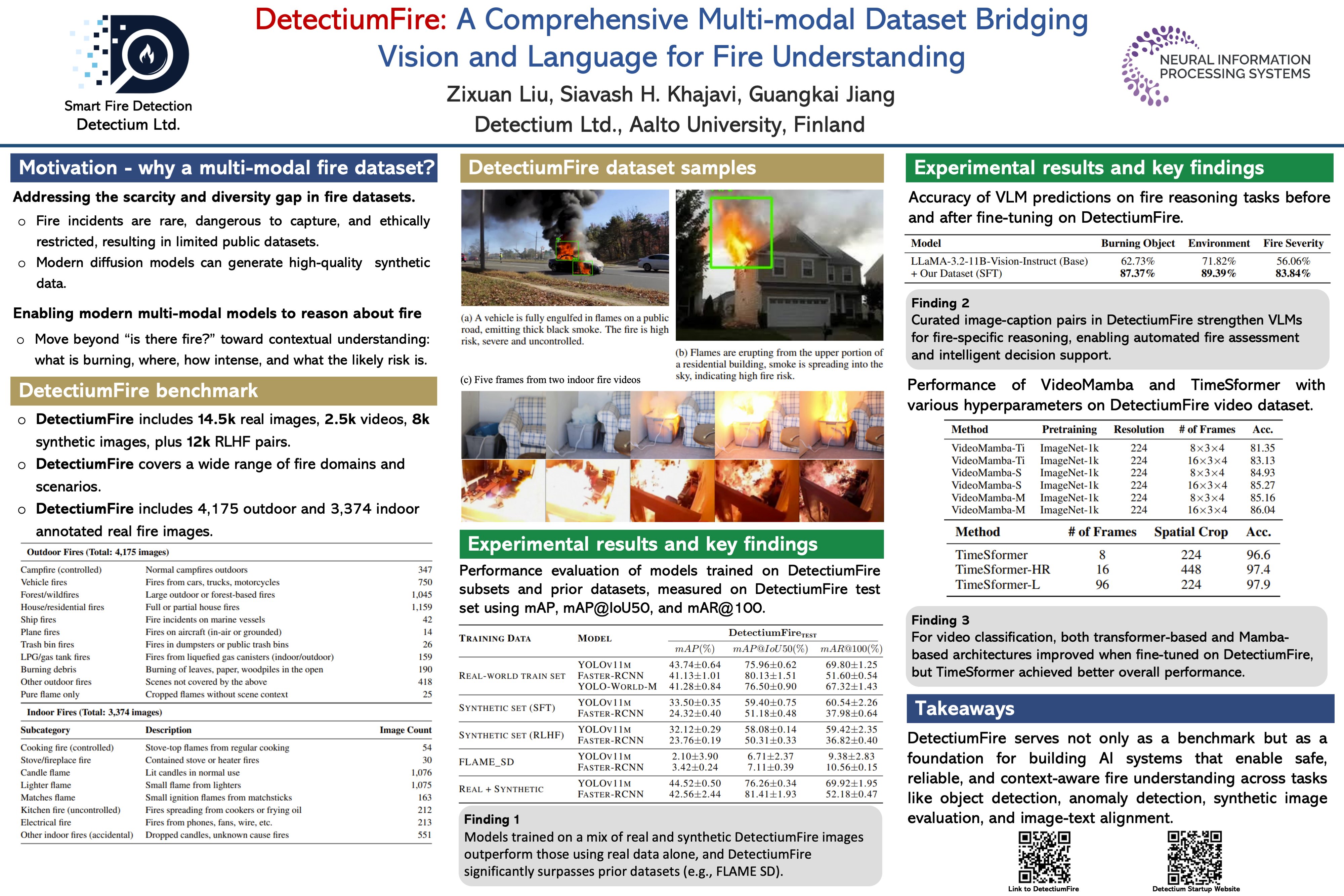

Multimodal Large Language Models (MLLMs) are pushing the boundaries of language and vision integration.

But, as Loc3R-VLM demonstrates, they still struggle with spatial understanding & viewpoint-aware reasoning. #VisionLanguage
