The Neuroscience of Transformers

arxiv.org/abs/2603.15339

#Transformers #NeuroAI #ANNs #BNNs

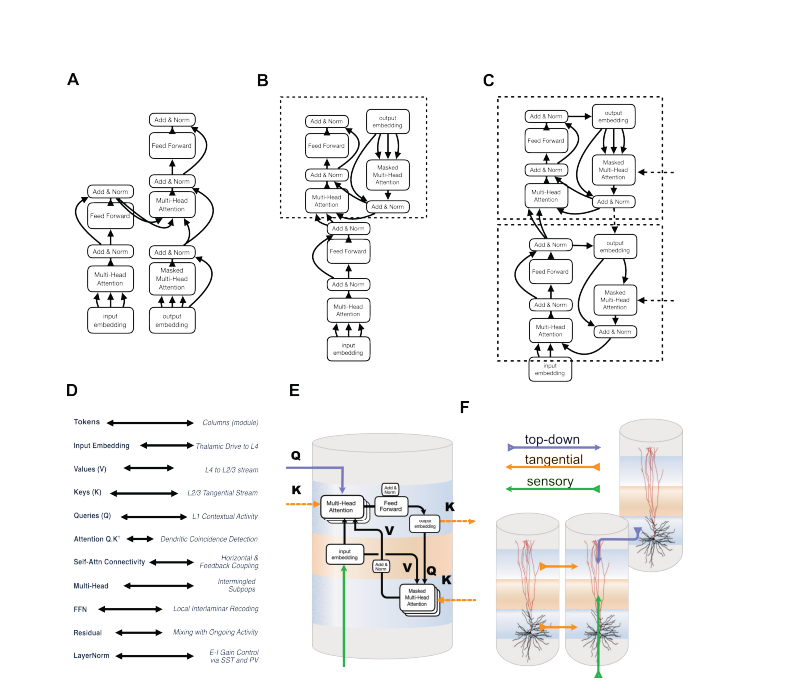

In this study, the authors explore the idea that modern AI architectures, specifically transformers, can provide new insights into how the brain’s cortical columns function. While the brain originally inspired artificial neural networks, the authors reverse this relationship by using transformers as a conceptual framework to interpret cortical microcircuits.

They propose an analogy (not a literal equivalence) between transformer operations and the layered (laminar) structure of the cortex. Through this mapping, key computational functions—such as contextual selection, information routing, recurrent processing, and layer-to-layer transformations—are reinterpreted in terms of cortical circuitry.
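For readers less familiar with the transformer side of the analogy, the sketch below shows scaled dot-product attention, the standard transformer operation usually described as contextual selection and routing. It is a minimal pure-Python illustration, not the authors' model: the toy `tokens` and the function names are assumptions made for this example, and no correspondence to specific cortical layers is implied.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    scale = math.sqrt(len(keys[0]))
    outputs, all_weights = [], []
    for q in queries:
        # Contextual selection: score every key against this query.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Routing: mix the value vectors according to those weights.
        mixed = [
            sum(w * v[d] for w, v in zip(weights, values))
            for d in range(len(values[0]))
        ]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical toy input: three 2-dimensional token vectors (self-attention).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)

# Residual connection: each token keeps its own state plus the routed context.
updated = [[x + o for x, o in zip(tok, out)] for tok, out in zip(tokens, outputs)]
```

Each row of `weights` sums to 1 and indicates how strongly one token draws on the others; the residual sum in `updated` is the step that hands the result on to the next layer.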

This framework generates testable hypotheses about brain function, including:

1) Specialized roles of cortical layers

2) Mechanisms of contextual modulation

3) Dendritic integration of inputs

4) Oscillatory coordination across circuits

5) Effective connectivity within cortical columns

Rather than claiming a definitive model, the authors present a structured hypothesis that helps unify AI and neuroscience at the level of computation. Overall, the work argues that comparing brain circuits with modern AI architectures can lead to deeper insights into both biological intelligence and artificial systems.