A PAC-Bayesian approach to generalization for quantum models.

We take steps towards non-uniform and data-dependent bounds for generalization of quantum machine learning models.

scirate.com/arxiv/2603.229…

In detail,

#Generalization is a central concept in machine learning theory, yet it is predominantly analyzed through uniform bounds that depend on a model's overall capacity rather than on the specific function learned. These capacity-based uniform bounds are often too loose and entirely insensitive to the actual training and learning process. Previous theoretical guarantees have failed to provide #nonuniform, data-dependent bounds that reflect the specific properties of the learned solution rather than the worst-case behavior of the entire hypothesis class.

To address this limitation, we derive the first

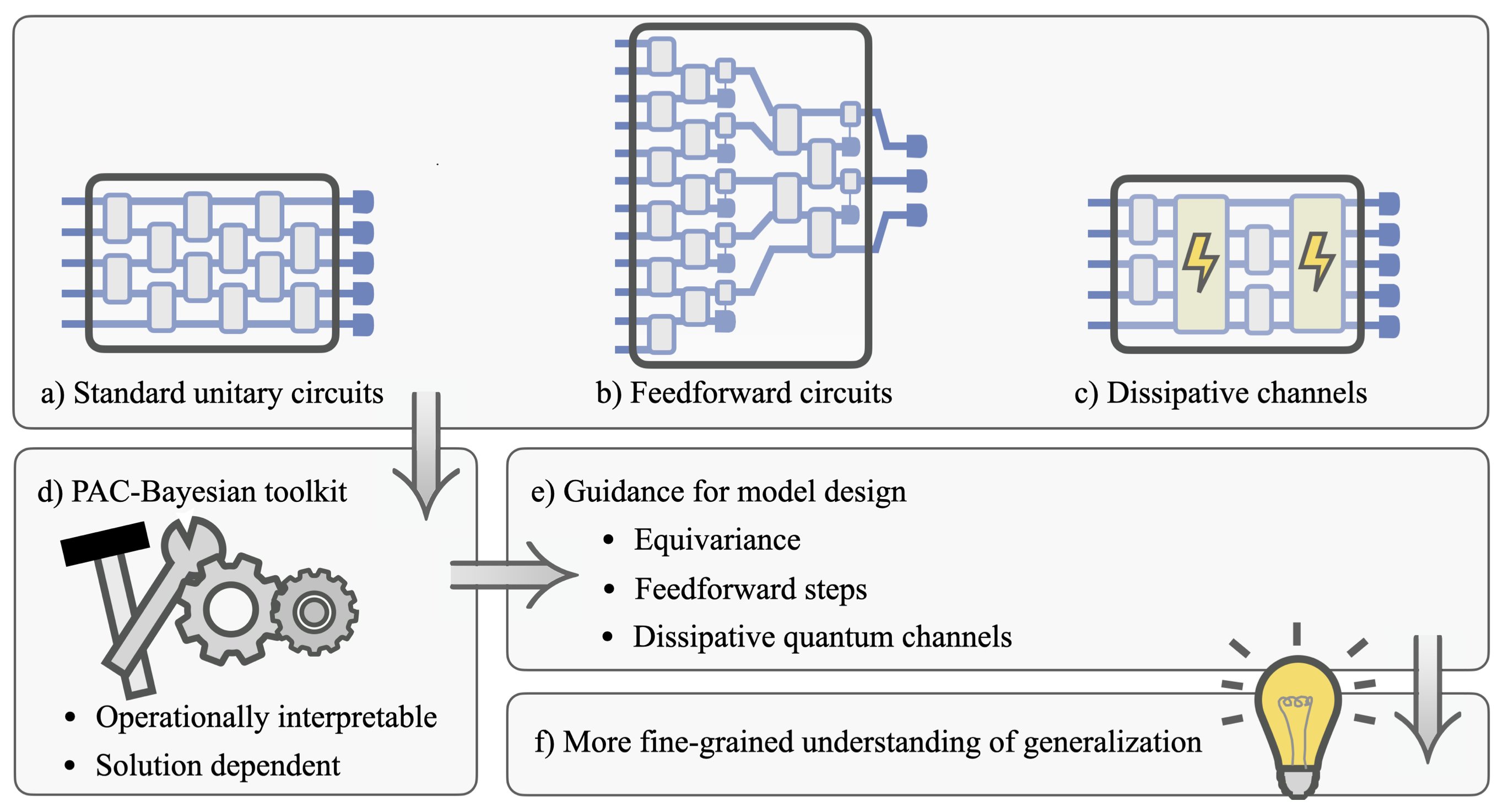

#PACBayesian generalization bounds for a broad class of quantum models by analyzing layered circuits composed of general quantum channels, which include dissipative operations such as mid-circuit measurements and feedforward.

Through a channel perturbation analysis, we establish non-uniform bounds that depend on the norms of the learned parameter matrices; we extend these results to symmetry-constrained equivariant quantum models; and we validate our theoretical framework with numerical experiments. This work provides actionable model-design insights and establishes a foundational tool for a more nuanced understanding of generalization in #quantummachinelearning.

Warm thanks to the team of @pablones8, Matthias C. Caro, @EliesMiquel, @FJSchreiber, and @charl_bp for this great collaboration.