A paper in

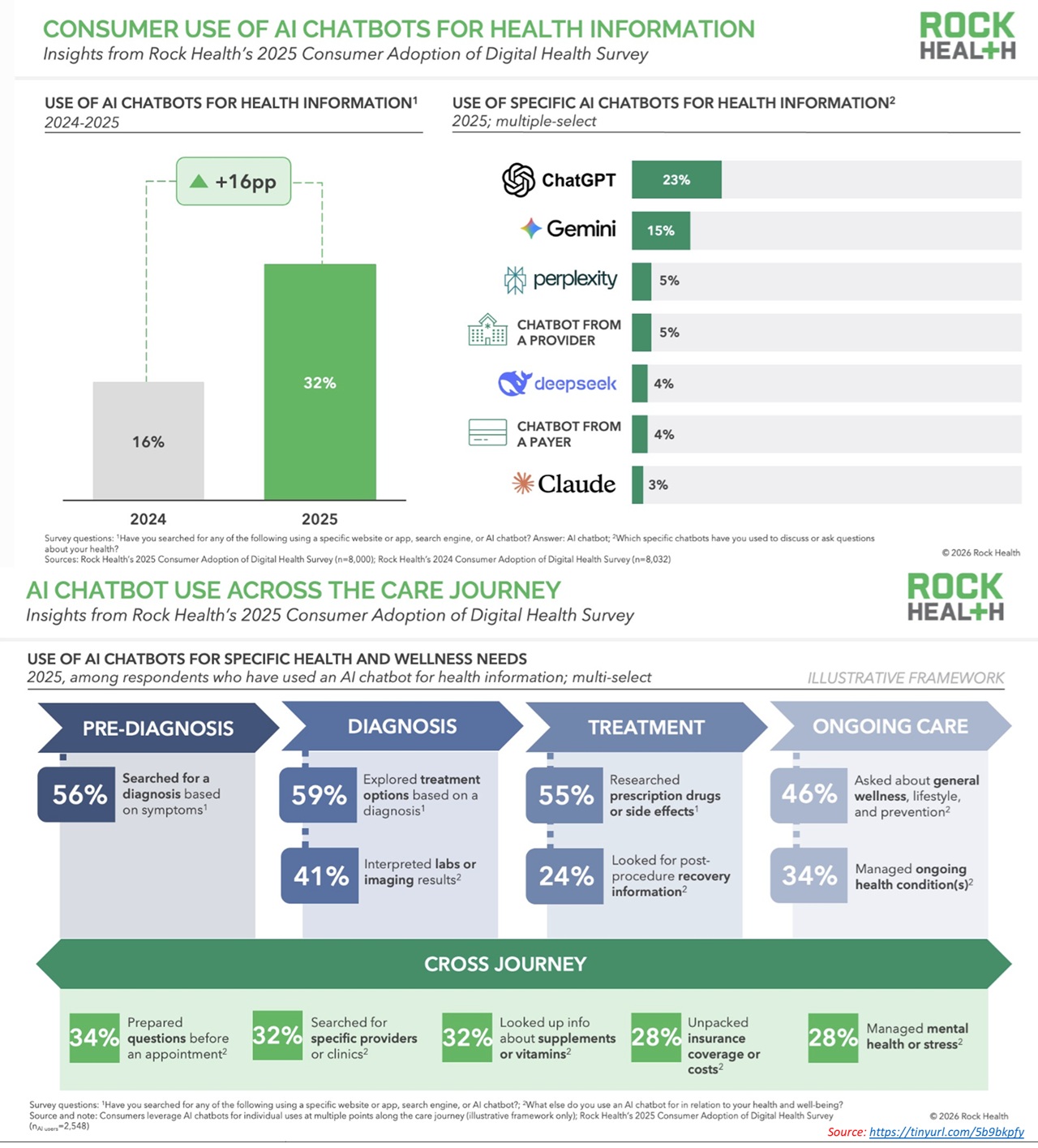

@JAMAPsych this week examines how chatbots respond to psychotic symptoms: paranoia, fixed false beliefs, loss of reality testing.

The findings are uncomfortable.

A substantial number of responses were inappropriate or only partly appropriate. Some replies echoed or reinforced false beliefs instead of gently questioning them or guiding the person toward help.

That difference is not academic. It is clinical.

In psychiatry, one holds two positions at the same time:

validate the distress, but do not validate the delusion.

The entire therapeutic process rests on that balance.

Once a false belief is reinforced, even subtly, conviction strengthens, insight drops and help gets delayed. Families recognise this trajectory well. Doubt narrows into certainty. The window for early intervention begins to close.

Now consider the setting.

A private interface.

A responsive system.

No judgment.

Immediate replies.

People open up. They trust what comes back. The language is fluent. The tone is reassuring. The confidence is consistent.

Accuracy becomes harder to judge.

The concern is not occasional error.

The concern is plausible responses in clinically unsafe directions.

Psychosis is not managed by information alone.

It requires judgment: when to support, when to reality-test, when to escalate, when to involve others. Those decisions carry responsibility.

Technology has a place.

Access improves. Stigma reduces. Early conversations become easier.

The boundary appears in vulnerable states: psychosis, suicidality, severe mood disturbance, where nuance determines outcome.

Conversation is not care.

Loss of reality testing needs assessment. Risk needs evaluation. Treatment needs supervision. These cannot be approximated.

Tools can assist.

Accountability remains human. @ompsychiatrist

Reference study:

ja.ma/4rQVwM8

#MentalHealth #Psychiatry #AIinHealthcare #Psychosis #DigitalHealth