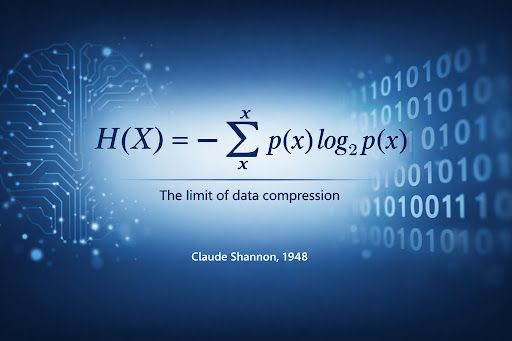

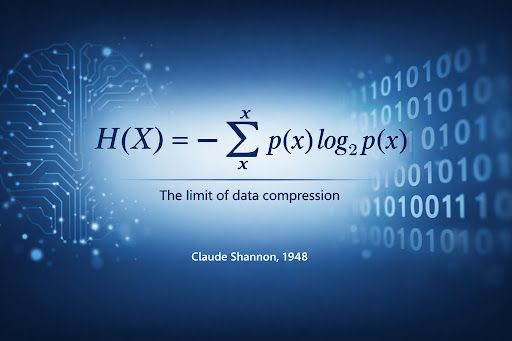

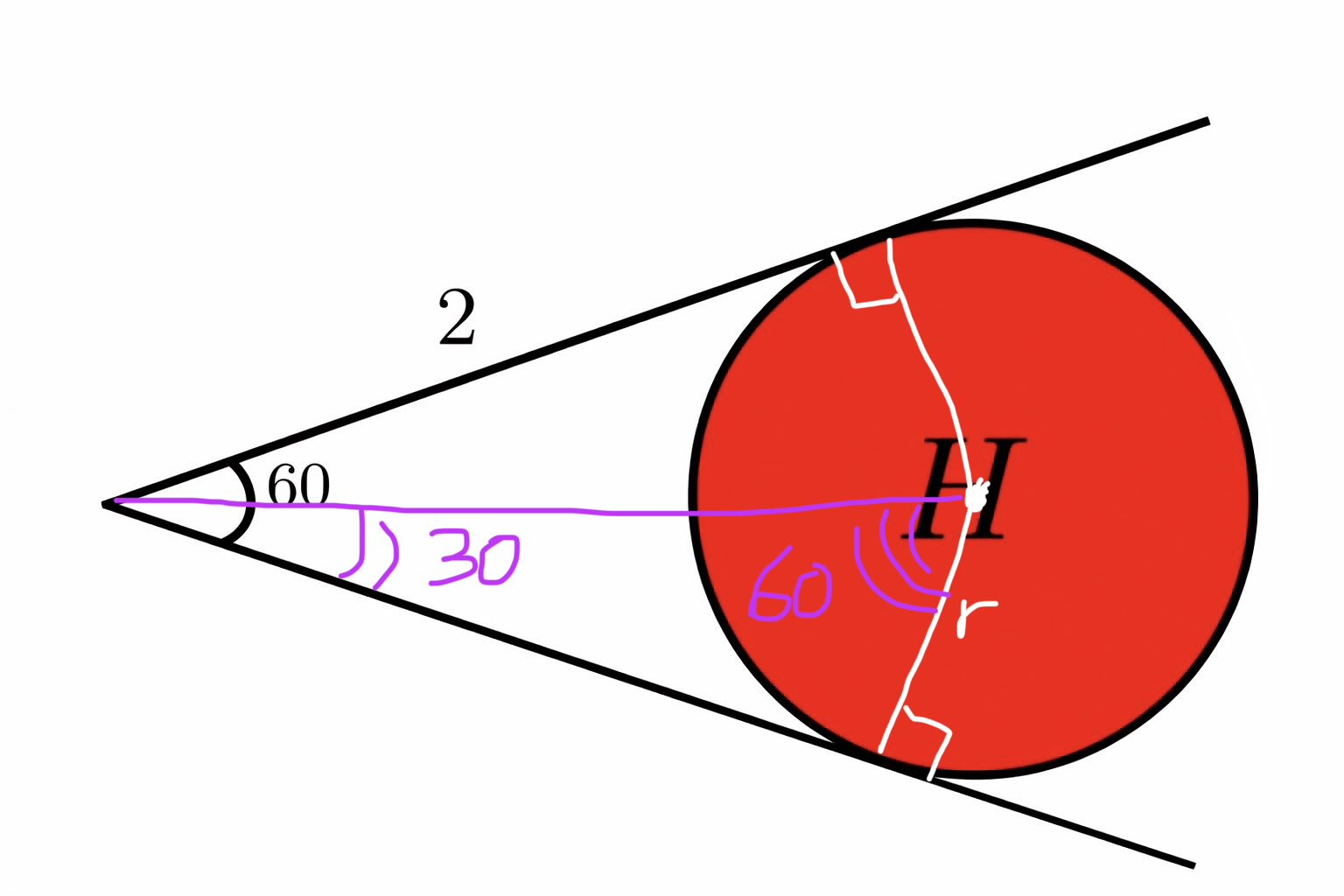

@H0H0v H = D.N.E.

Like others, I was pleasantly stumped by this visual math prompt: neither simplification nor substitution (e.g., 1, 0, the infinities) appears to yield a solution.

For example…

H^2 / H = H

But H = -H holds only for H = 0, where H^2 / H is undefined

H^2 = - (H^2) fails the same way, since it also forces H = 0
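
For anyone who wants to try the substitutions themselves, here is a rough Python sketch. It only tests the two relations written out in this post (H^2 / H and H = -H), not the original image prompt, and the names `check` and `candidates` are just made up for illustration.

```python
import math

def check(h):
    """Return (quotient, is_own_negative) for a candidate value h.

    quotient is h**2 / h, or None when that expression is undefined
    (division by zero, or inf / inf producing NaN).
    """
    try:
        quotient = h**2 / h
    except ZeroDivisionError:
        quotient = None
    if quotient is not None and math.isnan(quotient):
        quotient = None
    return quotient, h == -h

# Candidate substitutions mentioned in the post: 1, 0, and the infinities.
candidates = [1, 0, math.inf, -math.inf]
results = {h: check(h) for h in candidates}

for h, (quotient, is_own_negative) in results.items():
    print(f"H = {h}: H^2/H = {quotient}, H == -H is {is_own_negative}")
```

A candidate would only count as a solution if the quotient is defined and H == -H holds at the same time, and none of the four values tried here manage both.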

#math